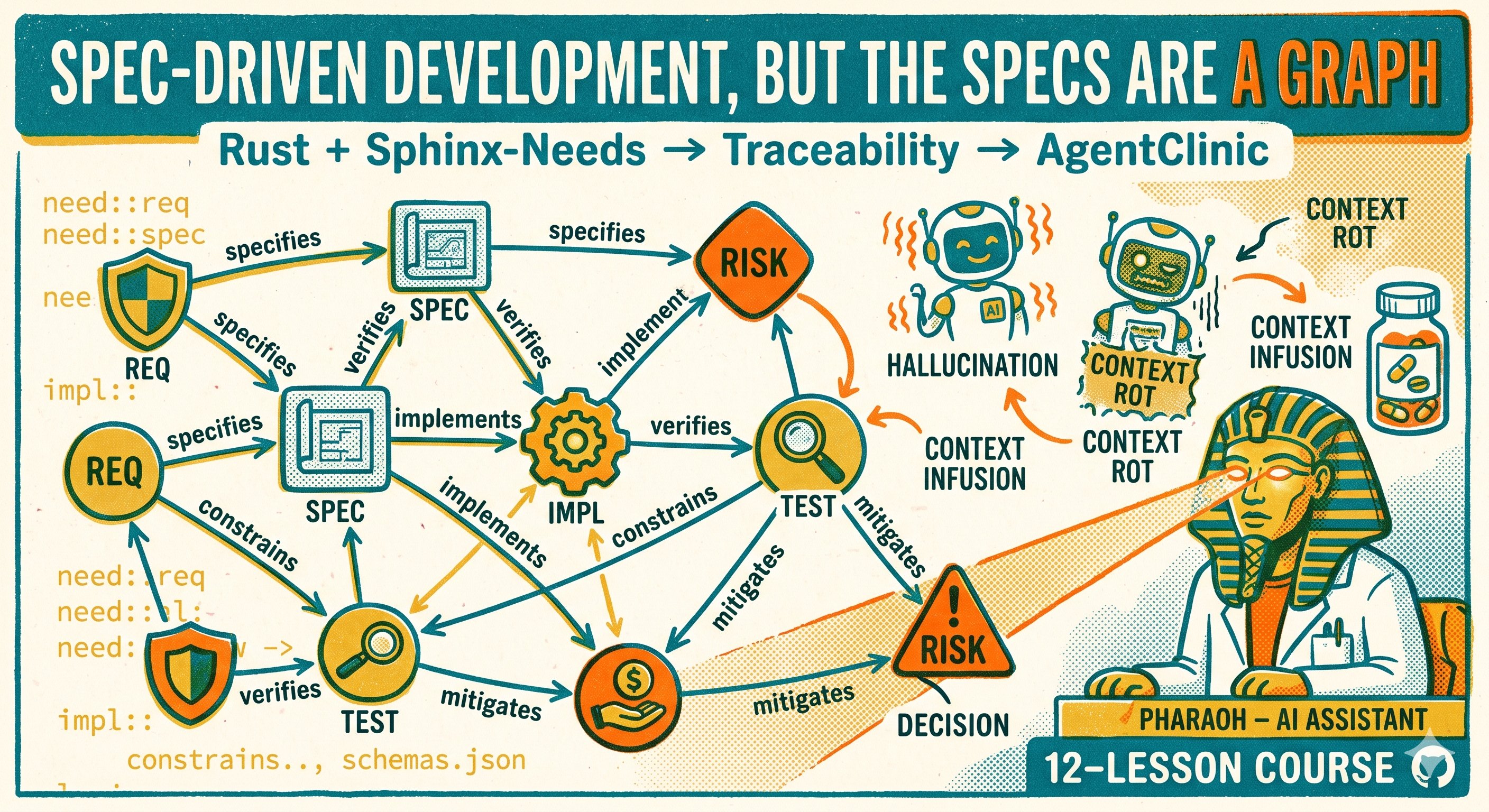

Your AI agents are productive. They write code, update specs, run tests. The tokens flow, the commits land, and everything looks fine — until someone asks a simple question: “Did we update everything that needed updating?”

Nobody can answer it. That’s the problem.

A benchmark worth reading

Erwin Roth from Audi ran a benchmark. Five coordination architectures for Claude Code. Same codebase, same scenario, four parallel agents. The task: propagate a single requirement change — brake response time from 100ms to 50ms — across 15 interconnected artifacts in an ISO 26262 safety-critical project. Requirements, specs, implementations, tests, architecture diagrams. Everything linked, everything needing an update.

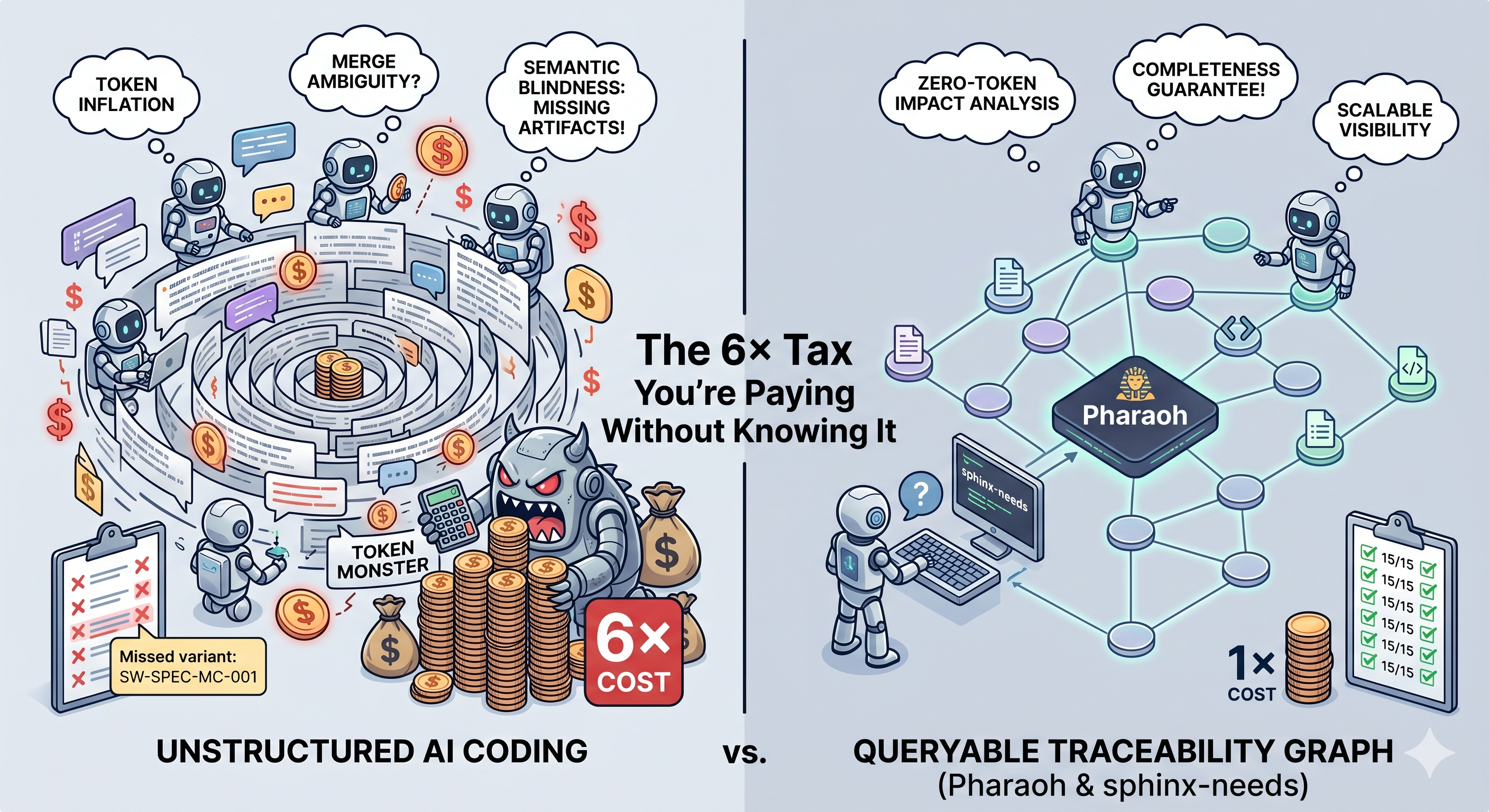

The simplest approach — Claude Code with a shared task list, no structural awareness — consumed 67,300 tokens. The most structured approach — Claude Code with Pharaoh, backed by sphinx-needs as its traceability graph — used 11,200 tokens.

That’s a 6x difference. And the expensive approach didn’t finish the job: it missed 4 out of 15 artifacts and produced ambiguous state that required manual intervention.

Three failure modes

Roth’s benchmark identified three failure modes. They don’t announce themselves. They just drain your budget and leave gaps.

Token inflation. Every agent turn reloads full project context. With a shared task list, that context grows with every completed step. Cost scales at least as O(turns × agents), and faster once the per-turn context itself keeps growing. In the benchmark, input tokens per turn stayed nearly flat for Pharaoh, around 1,000 from start to finish, while the unstructured approach climbed from 2,000 to over 5,500 per turn. Multiply by four agents and dozens of turns and you’re paying for the same information over and over.
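A rough way to see why the per-turn figures matter is to sum them over a run. The sketch below assumes per-turn input cost grows linearly between the start and end values quoted above, and it assumes 10 turns per agent. Both choices are illustrative and are not taken from the report, so the totals will not reproduce the benchmark's headline numbers.

```python
def cumulative_input_tokens(start: float, end: float, turns: int, agents: int) -> int:
    """Sum input tokens over a run, assuming per-turn cost grows linearly
    from `start` to `end` (an assumption, not a measured curve)."""
    if turns < 1:
        return 0
    total = 0.0
    for t in range(turns):
        # Linear interpolation between the first and the last turn.
        frac = t / (turns - 1) if turns > 1 else 0.0
        total += start + frac * (end - start)
    return round(total * agents)


# Run shape: 4 agents as in the benchmark, 10 turns each (assumed).
TURNS, AGENTS = 10, 4

flat = cumulative_input_tokens(1_000, 1_000, TURNS, AGENTS)     # graph-scoped context
growing = cumulative_input_tokens(2_000, 5_500, TURNS, AGENTS)  # shared task list

print(f"flat context:    {flat:,} input tokens")
print(f"growing context: {growing:,} input tokens")
print(f"ratio:           {growing / flat:.2f}x")
```

Under these assumptions the ratio depends only on the average per-turn cost, so any extra turns the unstructured approach needs come on top of it.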

Merge ambiguity. Multiple agents write to the same task log. “Agent REQ updated SW-SPEC-001.” “Agent SPEC updated SW-SPEC-001.” Now the master agent needs extra disambiguation passes to figure out who did what. This actually happened in the benchmark: two agents claimed ownership of the same artifact, and the coordination state became ambiguous mid-run. Not a theoretical risk. A recorded observation.
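The ownership conflict described above is easy to detect mechanically once it has happened. The following sketch scans a shared task log for artifacts claimed by more than one agent. The log format is an assumption modeled on the two entries quoted above, and the third entry is invented for contrast.

```python
import re
from collections import defaultdict

# Matches entries shaped like the two log lines quoted in the text.
# The exact log format is an assumption made for this sketch.
ENTRY = re.compile(r"Agent (?P<agent>\S+) updated (?P<artifact>[A-Z0-9-]+)\.?")


def find_contested_artifacts(log_lines):
    """Return {artifact_id: sorted agent names} for artifacts claimed by more than one agent."""
    claims = defaultdict(set)
    for line in log_lines:
        match = ENTRY.search(line)
        if match:
            claims[match["artifact"]].add(match["agent"])
    return {art: sorted(agents) for art, agents in claims.items() if len(agents) > 1}


log = [
    "Agent REQ updated SW-SPEC-001.",
    "Agent SPEC updated SW-SPEC-001.",
    "Agent TEST updated SW-TEST-001.",  # hypothetical entry, not from the benchmark
]

print(find_contested_artifacts(log))
```

Detecting the conflict does not resolve it: something still has to decide which agent's update stands, and that is where the extra disambiguation passes go.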

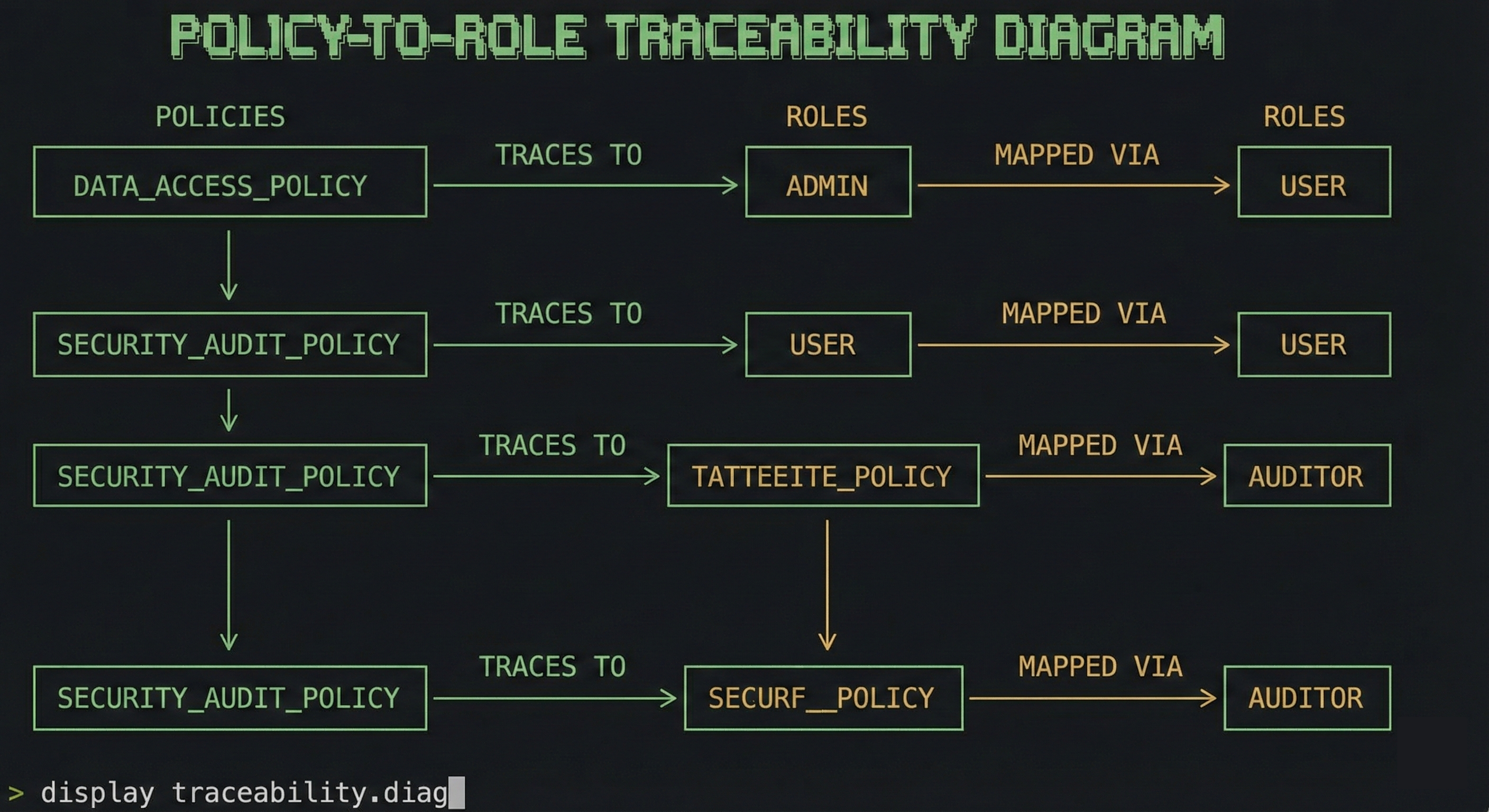

Semantic blindness. A shared task list tells agents what to do next. It does not tell them what it means for a task to be complete. Without the traceability graph — which requirements derive from which, which specs belong to which vehicle variant, which architecture diagrams connect via code links — agents can’t discover artifacts they weren’t told about. Six of the 15 artifacts required variant awareness or codelink traversal to find. Approaches without graph traversal missed some or all of them.

The gap is structural

Here’s what makes the numbers uncomfortable: the gap isn’t about prompt quality. It’s about architecture.

Claude Code (CC) + Superpowers, with carefully authored plan documents, scored 14 out of 15. Impressive, but it missed one motorcycle variant spec: the plan author didn’t know to include it. CC + spec2cloud scored 13. CC + Gastown/Beads scored 12.

Pharaoh scored 15 out of 15. Every artifact. Every variant. Every architecture link.

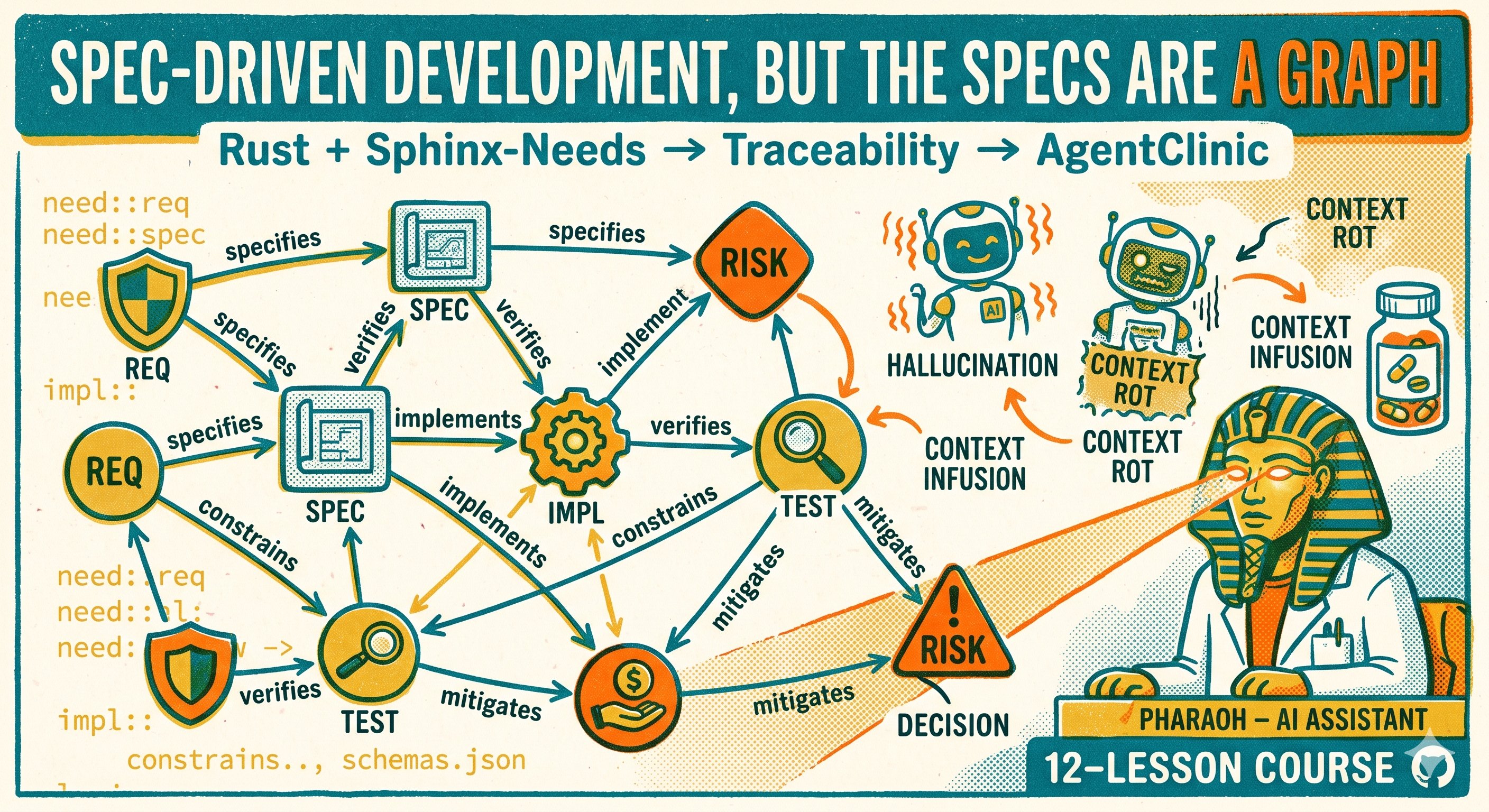

The mechanism is simple. Pharaoh doesn’t rely on anyone enumerating what needs to change. It traverses the traceability graph in sphinx-needs and returns the full impact set deterministically — before a single LLM token is spent. One changed field in SYS-REQ-001 produces 13 impact chains, all enumerated by ubc diff --impact at zero token cost. The agent works through that scoped set, loading only the relevant subgraph for each artifact level.
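To make the idea of a deterministic impact set concrete, here is a minimal sketch of a breadth-first traversal over a link graph. It is a generic illustration and not how ubc or Pharaoh is implemented. Only SYS-REQ-001 and SW-SPEC-001 appear in this post; every other ID and the graph shape are invented.

```python
from collections import deque

# Hypothetical traceability graph: each key links to the artifacts derived from it.
# Only SYS-REQ-001 and SW-SPEC-001 come from the post; the rest is made up.
DOWNSTREAM = {
    "SYS-REQ-001": ["SW-SPEC-001", "SW-SPEC-002-MOTO"],
    "SW-SPEC-001": ["IMPL-BRAKE", "ARCH-BRAKE"],
    "SW-SPEC-002-MOTO": ["IMPL-BRAKE-MOTO"],
    "IMPL-BRAKE": ["TEST-BRAKE"],
    "IMPL-BRAKE-MOTO": ["TEST-BRAKE-MOTO"],
}


def impact_set(graph, changed_id):
    """Breadth-first traversal returning every artifact reachable from changed_id."""
    seen = {changed_id}
    order = []
    queue = deque([changed_id])
    while queue:
        current = queue.popleft()
        for child in graph.get(current, []):
            if child not in seen:  # guard against diamonds and cycles
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


print(impact_set(DOWNSTREAM, "SYS-REQ-001"))
```

The variant branch is found because it is linked, not because someone remembered to list it. The same input always yields the same set, and no model call is involved.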

The completeness ceiling for plan-based approaches is set by what the plan author knew to write down. The completeness ceiling for graph-based approaches is set by the structure of the graph itself. One depends on human foresight. The other depends on data.

“But we’re not building safety-critical systems”

Most software projects don’t need ISO 26262. But the scenario, propagating a parameter change across interconnected artifacts, is universal. Every project with requirements that trace to specs that trace to code that traces to tests has this problem. The difference: in non-safety-critical projects, nobody audits whether you got it right. The missed artifacts become bugs, inconsistencies, or technical debt that surfaces weeks later.

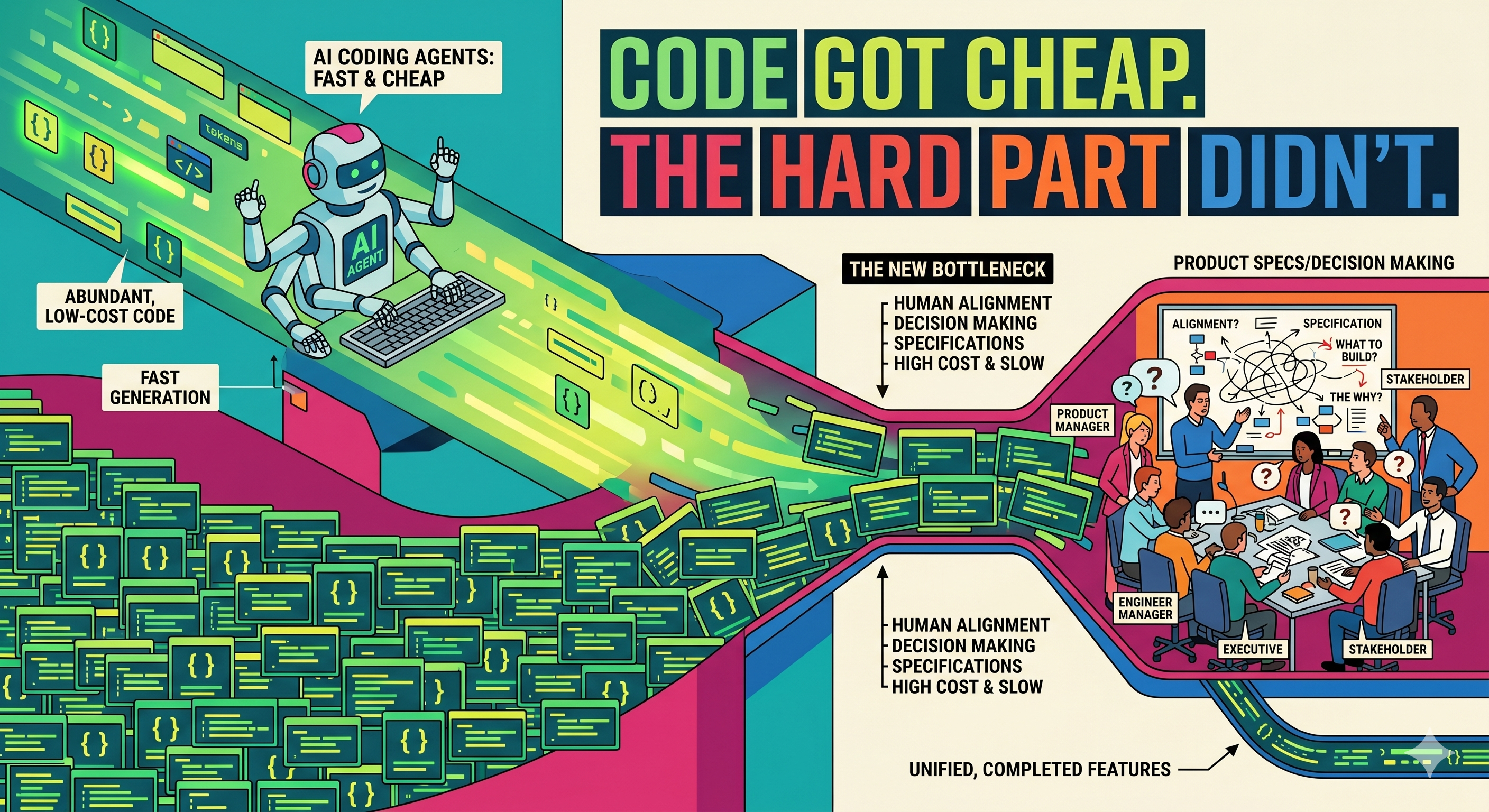

Georg Doll made this point at the useblocks User Conference: coding is 14% of a developer’s work time. The rest is understanding what a system is supposed to do, keeping documentation aligned with code and tests, and handling reviews and audits. AI agents handle the 14% well. Without structural awareness, they’re working blind on the other 86%.

Every project that uses AI agents is already paying the unstructured tax. You’re burning tokens on context reloads, disambiguation passes, and verification steps that wouldn’t exist if your agents could query a traceability graph instead of parsing a growing chat history.

What it looks like in practice

If you’re writing requirements in any form — markdown files, Jira tickets, RST — you’re halfway there. sphinx-needs lets you express those requirements as structured, linked objects with typed relationships. ubcode gives you the tooling to build, query, and validate those objects from the command line. Together, they turn your specs into a graph that both humans and machines can traverse.

Once your specs are in that graph, the economics change. The benchmark’s Pharaoh approach is one way to exploit the graph: it uses ubc diff --impact to enumerate the full impact set before any LLM token is spent, then dispatches scoped work per artifact level. That’s what got it to 15 out of 15 at a sixth of the token cost.

But you don’t need Pharaoh to benefit. The graph is the point, not any particular tool built on top of it. You could feed the output of ubc diff --impact into your existing agent prompts — Superpowers plans, spec2cloud FRDs, whatever coordination approach you already use — and close most of the completeness gap. The plan author who missed the motorcycle variant spec would have caught it if the impact set had been in the prompt. The agents that burned tokens on context reloads would have loaded less if they’d been scoped to the relevant subgraph.
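As one possible shape for feeding an impact set into an existing prompt, the sketch below renders a list of artifact IDs as a checklist. The function name, the prompt wording, and the example IDs are all hypothetical, and the sketch deliberately does not parse real ubc output, whose format is not described in this post.

```python
def build_scoped_prompt(change_summary, impact_ids):
    """Render a prompt section that lists every artifact the agent must revisit."""
    lines = [
        f"Requirement change: {change_summary}",
        "",
        "Artifacts in the impact set (update each one or justify why no change is needed):",
    ]
    lines += [f"- [ ] {artifact_id}" for artifact_id in impact_ids]
    lines += ["", "Do not mark the task complete while any box is unchecked."]
    return "\n".join(lines)


# Hypothetical impact set; in practice this would come from the graph tooling.
impact_ids = ["SW-SPEC-001", "SW-SPEC-002-MOTO", "TEST-BRAKE"]

print(build_scoped_prompt("brake response time 100ms -> 50ms (SYS-REQ-001)", impact_ids))
```

The checklist gives the agent an explicit definition of done, which is the piece a plain task list lacks.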

None of that requires your agents to be smarter. It requires your data to be structured.

Doll’s framing is right: the best model with bad context loses to a mediocre model with precise specs. The benchmark backs that up with numbers. sphinx-needs and ubcode turn your specs into the kind of precise, queryable context that agents actually need.

The bottom line

You’re paying a 6x tax. Not because your agents are bad, but because they don’t have a map of what’s connected to what.

The fix is spec-driven development. Write your requirements as structured, linked objects in sphinx-needs. Use ubcode to query, diff, and validate them. When a requirement changes, ubc diff --impact tells you — deterministically, at zero token cost — what else needs to change. Feed that into whatever agent coordination you already use.

You can build specialized tooling on top of the graph — pharaoh does exactly that. Or you can add the impact set to your Superpowers plans and get most of the benefit with no new tools in the loop. The point is the same either way: give your agents a structured graph instead of a growing chat history, and the completeness gap closes.

The overhead of setting it up is real. The alternative — burning tokens on context reloads, missing variant-derived artifacts, manually disambiguating shared state — is more expensive and less reliable.

Try it on a real requirement change. Not a demo. Not a single-file toy example. A change that touches requirements, specs, code, and tests across variants. That’s where the difference shows.

The benchmark data in this post is from Erwin Roth’s “Structured Traceability at Scale” report (Audi, March 2026). The Spec-Driven Development framework is from Georg Doll’s presentation at the useblocks User Conference (Microsoft, April 2026). Both are publicly available.